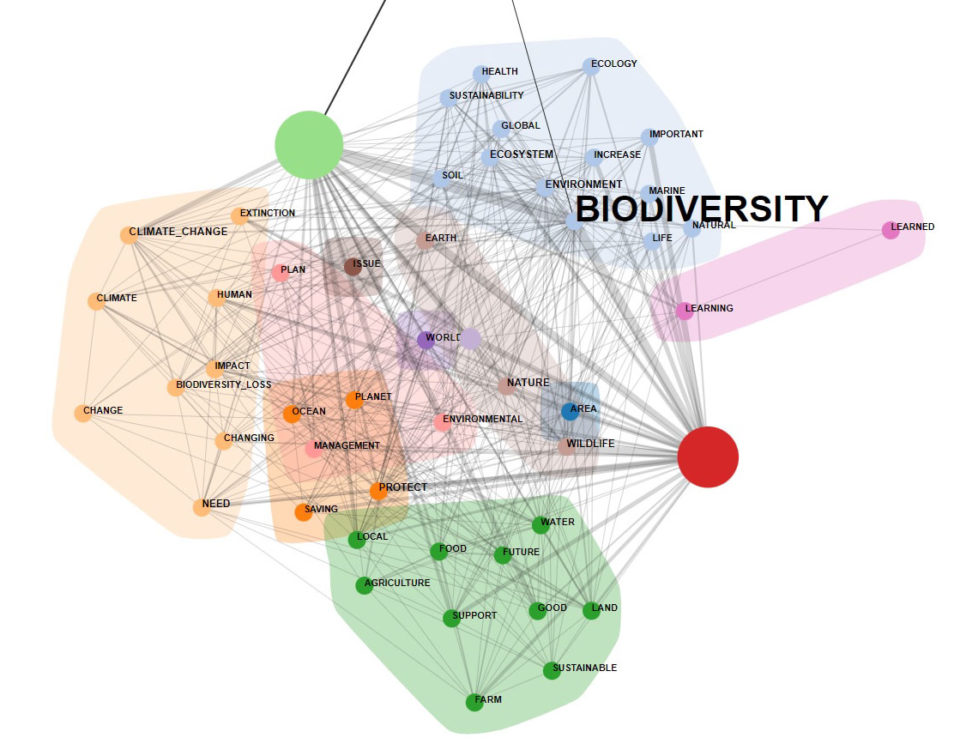

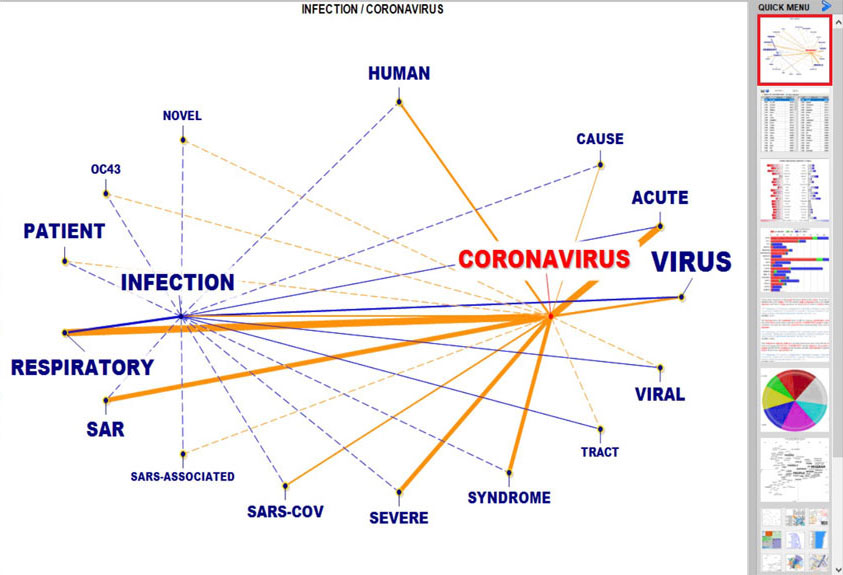

The new analysis options include various clustering methods, five centrality measures, and further measures such as Assortativity Coefficient, Clustering Coefficient, Entropy and Positive Pointwise Mutual Information.
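For readers unfamiliar with Positive Pointwise Mutual Information (PPMI), the following is a minimal sketch of the standard definition, PPMI(a, b) = max(0, log2(P(a, b) / (P(a) P(b)))), computed over co-occurrences within context units. It does not reproduce how T-LAB computes the measure; the function name `ppmi` and the sample data are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def ppmi(contexts):
    """Return {(word_a, word_b): PPMI} for words co-occurring in the same context.

    Illustrative only: probabilities are estimated as the share of
    contexts containing a word (or a pair of words).
    """
    n = len(contexts)
    word_counts = Counter()
    pair_counts = Counter()
    for ctx in contexts:
        words = sorted(set(ctx))  # count each word once per context
        word_counts.update(words)
        pair_counts.update(combinations(words, 2))

    scores = {}
    for (a, b), n_ab in pair_counts.items():
        # P(a,b) / (P(a) * P(b)) simplifies to n_ab * n / (n_a * n_b)
        pmi = math.log2((n_ab * n) / (word_counts[a] * word_counts[b]))
        scores[(a, b)] = max(0.0, pmi)  # negative associations are clipped to 0
    return scores


# Hypothetical sample data: each inner list is one context unit.
contexts = [
    ["text", "mining"],
    ["text", "analysis"],
    ["text", "mining"],
    ["data", "analysis"],
]
scores = ppmi(contexts)
```

Pairs that co-occur less often than chance would predict receive a score of 0, which is what distinguishes PPMI from plain PMI.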

The Co-Word Analysis tool now offers a new interactive dendrogram, which allows the user to explore the relationships between up to 3,000 (three thousand) keywords...

The Corpus Builder tool now allows one to easily import data in three further formats: .SAV (i.e. SPSS files), .JSON (e.g. Twitter data) and .XML. Moreover, T-LAB now generates a corpus from a data table with thousands of records faster than before.

Latest update (5 May 2022): automatic lemmatisation for the Latin language added...

A new wizard has been added which makes it easy to classify new documents according to a pre-existing model, and to compare any new document with all the documents in a previously analysed corpus ...

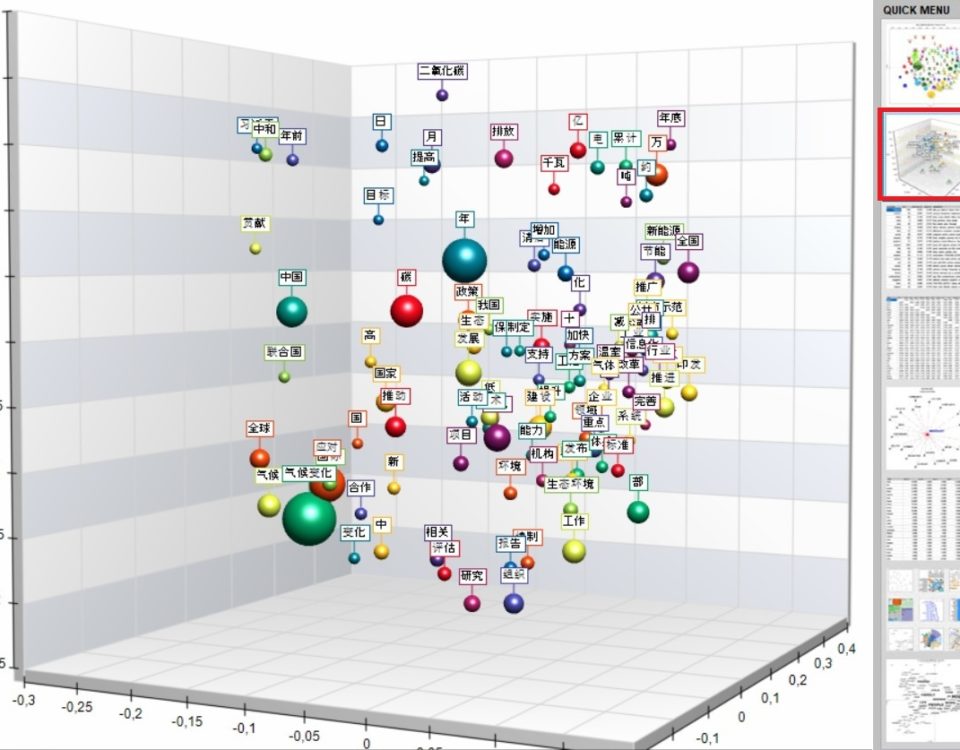

All T-LAB tools which include the 3D bubble chart option now use a new, highly customizable type of visualization.

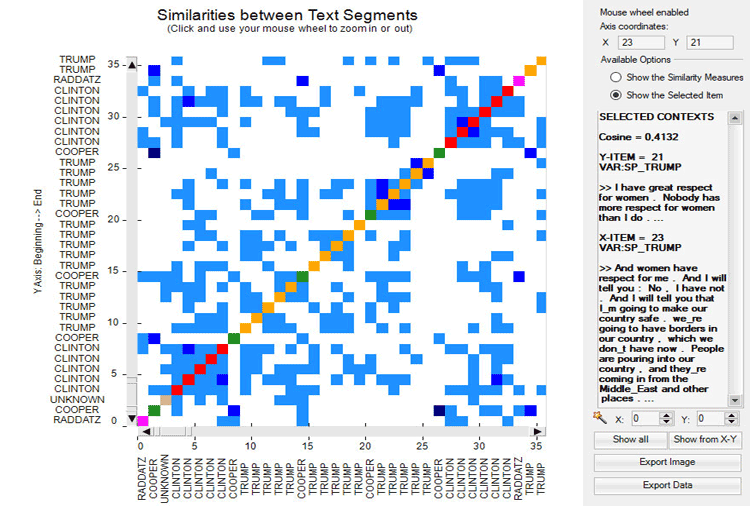

A new tool named ‘Texts and Discourses as Dynamic Systems’ has been added, which integrates various state-of-the-art algorithms already present in T-LAB with Recurrence Quantification Analysis...
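As a rough illustration of what Recurrence Quantification Analysis measures, the sketch below computes the recurrence rate of a categorical sequence: the share of off-diagonal position pairs (i, j) at which the same item recurs. This is a generic textbook measure, not a description of T-LAB's implementation; the function name `recurrence_rate` and the sample tokens are hypothetical.

```python
def recurrence_rate(sequence):
    """Share of ordered position pairs (i != j) holding the same item."""
    n = len(sequence)
    if n < 2:
        return 0.0
    recurrent = sum(
        1
        for i in range(n)
        for j in range(n)
        if i != j and sequence[i] == sequence[j]  # main diagonal excluded
    )
    return recurrent / (n * (n - 1))


# Hypothetical token sequence: "a" occurs three times, "b" twice.
tokens = ["a", "b", "a", "c", "b", "a"]
rate = recurrence_rate(tokens)
```

A higher rate indicates that the text returns more often to items it has already used; full RQA adds further measures based on diagonal and vertical line structures in the recurrence plot.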

The Concordances tool now includes a new option which allows the user to build and explore a dynamic Word-Tree of any selected item.

The way the Corpus Builder tool handles CSV files in different languages has been significantly improved ...